Most still misunderstand where the value of AI really is

This article is written by Renessai’s co-founder Ossi Syd.

I've been sitting with a radical question lately: Does the general public systematically and repeatedly misunderstand the nature of the value AI actually creates?

Bold claim. Let me try to make the case for one possible interpretation.

Language models have dominated the current AI wave and hype cycle. Interacting with them creates an illusion of human-likeness – and in many ways, it truly is a giant leap compared to previous human-machine interaction. Language models are built in a way that means they rarely admit they don't know something. A wrong answer comes with the same confidence and level of detail as a correct one.

At the same time, the concept of "agentic AI" has exploded. By now I read the conversation this way: any AI that improves any process in any way gets called an agent, and almost everything else gets labelled an "AI agent" for commercial reasons.

Painting with a broad brush: We regularly read articles analysing whether AI (meaning a language model) solved some problem 70% or 95% correctly. Or whether prompting and iteration actually took more time than the traditional approach. Or whether the ROI was really better than the old way.

It's not about one trick

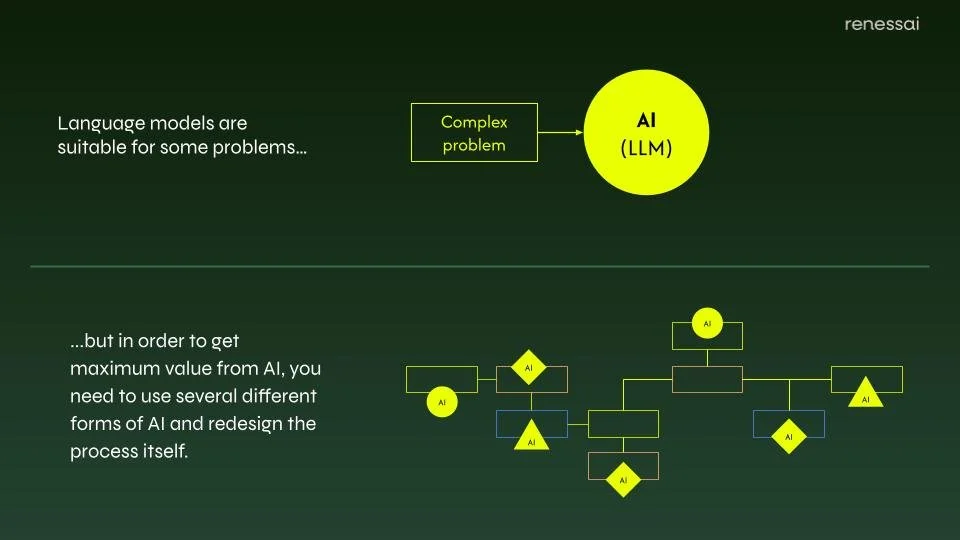

My argument is that this thinking is monolithic: there is some complex "human task" that people try to solve by dumping it wholesale into a language model. Depending on how well or poorly that seems to go, we judge AI's potential in that problem domain.

The far more interesting scenario – and the real source of value – looks like this:

Take a complex, real-world process that involves many different forms of human work and classical IT: deterministic rule-following alongside non-deterministic "creativity" and "intuition".

Comb through that process and identify the distinct ways value is actually created*. For each value-creation mechanism, select the AI paradigm that enhances it most effectively – across a wide spectrum.

Sometimes you'll be closer to advanced mathematics and optimisation**; sometimes closer to neural networks and language models.

Depending on the case, forms of AI other than LLMs can bring far more value to the business.

Against that backdrop, develop a set of targeted AI enhancements, roll them out following best practices in modularisation and change management, and measure the systemic impact of each change as you go.

The range is wide: It's easy to imagine large industrial processes that need no language models at all. Or creative processes where language models are absolutely central – a familiar and obvious example is augmenting software development.

The form of human interaction also varies greatly, or there may be none at all. It is worth noting, however, that in many contexts, prompting as we understand it today is rare.

This framing also removes the stumbling block of monolithic thinking: the question of whether AI (again, the speaker usually means a language model) can solve this complex whole well or not. It doesn't need to. If we improve ten different stages of a process by 3% each, using different AI paradigms, the cumulative effect can be substantial.
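To put a rough number on that, here is a back-of-the-envelope sketch. It rests on assumptions that are mine, not measurements: every stage's 3% gain applies to the same overall metric (throughput, say), and the gains either simply add up or compound multiplicatively. The function names are hypothetical and exist only for this illustration.

```python
def additive_gain(stage_gains):
    """Overall fractional gain if per-stage gains simply add up."""
    return sum(stage_gains)


def compound_gain(stage_gains):
    """Overall fractional gain if per-stage gains multiply (compound)."""
    total = 1.0
    for gain in stage_gains:
        total *= 1.0 + gain
    return total - 1.0


# Ten stages, each improved by 3% (illustrative figures from the text).
stage_gains = [0.03] * 10

print(f"additive:    {additive_gain(stage_gains):.1%}")   # 30.0%
print(f"compounding: {compound_gain(stage_gains):.1%}")   # 34.4%
```

Under either assumption the total lands around a third, even though no single stage was "solved" by AI. Real processes have bottlenecks and interactions between stages, so the actual systemic effect has to be measured rather than computed this way.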

This is a genuinely central aspect of understanding AI transformation. Note that I'm not dismissing language models – they are remarkable tools for many things. I'm simply zooming out to see the systemic change more clearly.

AI ≠ LLMs

Most businesses mistakenly think that prompting LLMs will transform their business as a single silver-bullet solution.

It's already here

In recent months, our client conversations have shown this shift in practice: attention is moving from AI automation of individual tasks to the redesign of entire processes (or equivalent end-to-end entities).

The essential principles for getting started:

Modularise the process so that it can be changed piece by piece.

Choose the appropriate AI paradigms based on the type of value creation involved (it’s not always about automation).

Measure the change so that you know which direction the ship is turning.

We have seen this approach open the floodgates of thinking and action, particularly in organisations in industries where there is no single obvious application for (LLM-like) AI, such as construction or manufacturing.

* It's often worth talking specifically about the ways value is created "behind" processes, rather than the processes themselves. Process documentation tends to carry thick layers of assumptions, organisational folklore, and ingrained habits.

** I'm aware that mathematical optimisation is not necessarily AI in the academic sense. I gave up that argument a long time ago. Some professors are still fighting for doctrinal purity – more power to them.